In high-volume environments, you may encounter the following error when ingesting data into your OpenSearch/Elasticsearch cluster:

Error HTTP 429: Too Many Requests

In NiFi, this would crop up as an uncaught exception:

Digging into the OpenSearch and client logs, you’d see errors along the lines of:

Data too large, data for [<http_request>] would be [123mb], which is larger than the limit of [123mb]

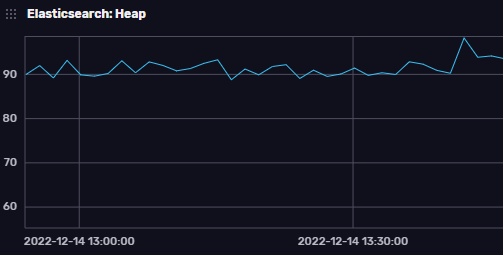

A bit of digging around turns up our first clue: suggestions to increase the heap memory [1]. Indeed, looking at the heap memory usage of OpenSearch, we see the JVM heap sitting consistently high, at about 90-97%.
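If you don't have a dashboard handy, the same heap figure can be read from the node stats API. The following is a minimal sketch, assuming an unauthenticated cluster at `http://localhost:9200` (a placeholder, as are the function names and the 90% threshold); it reads the `heap_used_percent` value reported per node by `GET /_nodes/stats/jvm`.

```python
import json
from urllib.request import urlopen


def heap_usage_by_node(stats: dict) -> dict:
    """Map node name -> JVM heap used percent from a _nodes/stats/jvm response."""
    return {
        node["name"]: node["jvm"]["mem"]["heap_used_percent"]
        for node in stats["nodes"].values()
    }


def nodes_over_threshold(stats: dict, threshold: int = 90) -> list:
    """Return the sorted names of nodes whose heap usage is at or above the threshold."""
    usage = heap_usage_by_node(stats)
    return sorted(name for name, pct in usage.items() if pct >= threshold)


def fetch_jvm_stats(base_url: str = "http://localhost:9200") -> dict:
    """Fetch JVM stats from the cluster (base_url is an assumed placeholder)."""
    with urlopen(f"{base_url}/_nodes/stats/jvm") as resp:
        return json.load(resp)
```

Calling `nodes_over_threshold(fetch_jvm_stats())` against a live cluster would list the nodes currently at or above 90% heap; a secured cluster would additionally need credentials and TLS settings, which are left out here.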

This leads us to a rather helpful article here:

https://aws.amazon.com/premiumsupport/knowledge-center/opensearch-circuit-breaker-exception/

Specifically, the items pertaining to fielddata seem to be exactly what was required in our case:

POST /_all/_cache/clear?fielddata=true

Running the above frees up a lot of heap memory, and in fact the 429 errors stop. It also points to some tuning that needs to be done in terms of “fielddata”.

screenshot showing a decrease in heap usage from approx 90% to approx 60%
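As a starting point for that tuning, it helps to know which fields are actually holding fielddata before clearing the cache. The sketch below is an illustration rather than the exact procedure used here: it assumes an unauthenticated cluster at `http://localhost:9200` (a placeholder, like the function names), builds the same cache-clear call shown above, and totals per-field sizes from the rows returned by `GET /_cat/fielddata?format=json&bytes=b`.

```python
import json
from urllib.request import Request, urlopen


def clear_fielddata_request(base_url: str = "http://localhost:9200",
                            index: str = "_all") -> Request:
    """Build (without sending) the POST request that clears the fielddata cache."""
    return Request(f"{base_url}/{index}/_cache/clear?fielddata=true", method="POST")


def fielddata_bytes_by_field(rows: list) -> list:
    """Sum fielddata bytes per field across nodes, largest first.

    `rows` is the JSON list returned by GET /_cat/fielddata?format=json&bytes=b,
    where each row carries a "field" name and a "size" in bytes (as a string).
    """
    totals = {}
    for row in rows:
        totals[row["field"]] = totals.get(row["field"], 0) + int(row["size"])
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


def clear_fielddata(base_url: str = "http://localhost:9200") -> dict:
    """Send the cache-clear request and return the parsed JSON response."""
    with urlopen(clear_fielddata_request(base_url)) as resp:
        return json.load(resp)
```

Fields that dominate the ranking are the natural candidates for a mapping review. Clearing the cache only buys time if the same queries keep reloading the same fielddata.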
